Artificial intelligence is quickly becoming a core part of court operations and the practice of law. Despite its growing role in courtrooms across the country, many legal professionals still grapple with basic questions about its responsible implementation, possible risks, and practical uses.

Based on our extensive research into AI hallucinations and the use of AI in courts, along with insights gathered from 17 interviews with judges, legal experts, and technology specialists, we aim to address the most vital questions confronting today’s legal professionals.

What are the real risks of AI hallucinations in legal practice?

AI hallucinations—situations where an AI system generates content that appears credible but is factually incorrect—pose significant risks for legal professionals. These inaccuracies may include referencing nonexistent cases, concocting statistics, delivering inconsistent information, or providing misleading legal conclusions.

A notable concern is that AI may produce mistakes that a human would never commit, such as adding a fourth element to a cause of action that has only three. Such mistakes can easily evade traditional review processes, creating latent vulnerabilities in legal work.

Recent cases illustrate the potential consequences. In Mata v. Avianca, Inc., counsel relied on hallucinated case law produced by an AI tool, wrongly vouched for its reliability, and submitted fabricated excerpts from nonexistent judicial decisions. Similarly, in Shahid v. Esaam, a judge unwittingly included fabricated citations in an order, having trusted an attorney’s AI-generated content without independent verification. These incidents underscore how quickly misinformation can enter the judicial record when AI outputs are not carefully scrutinized.

How can courts balance AI adoption with accuracy requirements?

Ensuring accuracy through verification and human oversight

The solution lies not in shunning AI but in implementing stringent verification protocols complemented by human oversight at every stage. Professional-grade AI solutions like CoCounsel Legal are deliberately crafted to tackle these issues through:

- Built-in verification features that cross-check against authoritative legal databases

- Citation validation capabilities that ensure the correctness of case law references

- Transparent sourcing that enables users to trace AI-generated content back to original sources

- Specialized training focused on legal reasoning standards rather than generic linguistic patterns

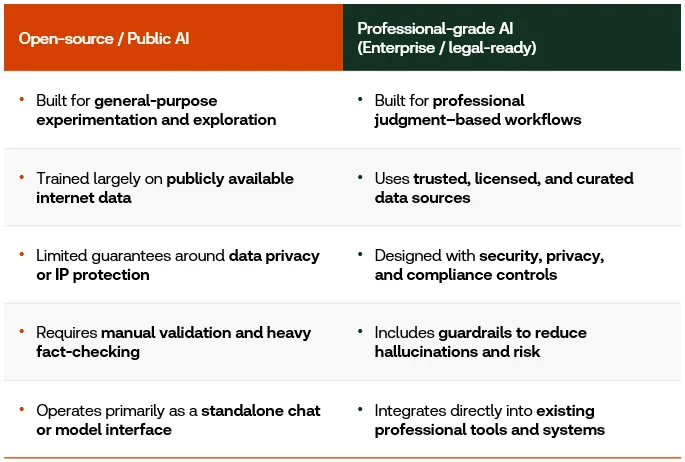

Identifying professional-grade AI versus public AI

Professional-grade AI platforms like CoCounsel Legal come equipped with critical safeguards that are often missing in consumer-focused alternatives.

What does integrating AI into legal workflows look like?

AI as an assistant, not an arbiter

AI should augment, rather than replace, legal judgment, a caution voiced by Judge Samuel A. Thumma of the Arizona Court of Appeals.

AI functions most effectively when treated as a research assistant that enhances professional judgment rather than substituting for it. Thomson Reuters Chief Technology Officer Joel Hron describes this role as that of “a thought partner or a critic,” helping to test assumptions and broaden perspectives.

Verification protocols courts should implement

Courts should establish comprehensive validation processes that include:

Mandatory review procedures

- Independent verification of all AI-generated citations against authoritative databases

- Confirmation that quoted passages are accurate and properly contextualized

- Validation ensuring that legal propositions align with the actual holdings of cited authorities
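As a rough illustration of the first two review steps above, the following Python sketch flags citations and quotations that cannot be matched to a trusted source. It is a minimal sketch under stated assumptions, not a description of how any particular product works: `known_authorities` is a hypothetical in-memory stand-in for a lookup against an authoritative legal database, the citation pattern covers only a few reporter formats, and the sample citations and opinion text are invented placeholders. The third step, confirming that a legal proposition matches the actual holding, is left to a human reader.

```python
import re
from dataclasses import dataclass

# Deliberately narrow pattern: matches forms like "123 U.S. 456" or
# "123 F.3d 456". Real citation formats are far more varied.
CITATION_RE = re.compile(r"\b\d{1,4} (?:U\.S\.|F\.(?:2d|3d|4th)) \d{1,4}\b")

# Double-quoted passages of at least 20 characters.
QUOTE_RE = re.compile(r'"([^"]{20,})"')


@dataclass
class Finding:
    item: str     # the citation or quotation that failed a check
    problem: str  # why a human reviewer needs to look at it


def review_draft(draft: str, known_authorities: dict[str, str]) -> list[Finding]:
    """Return items that a human reviewer must check before filing.

    known_authorities maps a citation string to the opinion text.
    It is a hypothetical stand-in for an authoritative database.
    """
    findings = []

    # Step 1: every citation must exist in the trusted source.
    for cite in dict.fromkeys(CITATION_RE.findall(draft)):  # de-duplicate, keep order
        if cite not in known_authorities:
            findings.append(Finding(cite, "citation not found in trusted source"))

    # Step 2: every quoted passage must appear verbatim in some known opinion.
    for quote in QUOTE_RE.findall(draft):
        if not any(quote in text for text in known_authorities.values()):
            findings.append(Finding(quote, "quotation not found in any known opinion"))

    return findings


if __name__ == "__main__":
    # Placeholder citations and text, invented for illustration only.
    authorities = {
        "998 F.4th 1": "We conclude that the statute applies only to federal claims.",
    }
    draft = (
        'See 998 F.4th 1 and 999 F.4th 9999. The court held '
        '"the statute applies only to federal claims."'
    )
    for finding in review_draft(draft, authorities):
        print(f"REVIEW NEEDED: {finding.item} ({finding.problem})")
```

A check like this can only raise flags. An empty list of findings means that nothing was caught automatically, not that the draft is correct, so the human review described above still applies.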

Technology-assisted verification

Professional legal research providers now offer tools explicitly designed to scrutinize briefs, judicial orders, and opinions for accurate case citations and quotes. These automated verification systems can flag potential concerns for human review while maintaining workflow efficiency.

Training and education

Judicial bodies should establish comprehensive educational programs to ensure that all individuals interacting with the legal system possess a foundational understanding of AI and its proper application in legal contexts. While technical expertise isn’t necessary, a basic comprehension of AI fundamentals is vital for the responsible integration of these tools into judicial processes.

The AI Policy Consortium for Law & Courts, a collaboration between the Thomson Reuters Institute and the National Center for State Courts, offers a role-based learning toolkit aimed at enhancing AI literacy within the courts.

How can AI improve access to justice?

With appropriate safeguards, AI tools can significantly enhance access to justice. Judge Maritza Braswell has underscored that avoiding AI altogether only exacerbates the challenges faced by individuals attempting to navigate the legal system without professional assistance.

Professional legal AI platforms can assist self-represented litigants by:

- Translating complex legal concepts into straightforward language

- Offering procedural guidance

- Facilitating basic document preparation

- Providing educational resources that clarify legal processes

However, it is crucial to emphasize that improved access does not automatically ensure fair outcomes. The objective must be to pair greater access with tools and processes that promote true justice.

What does responsible AI implementation look like?

Responsible AI deployment in legal settings necessitates three primary components:

Human-centric approach. AI must be supervised by humans, never functioning as an autonomous decision-maker. Legal professionals bear ultimate responsibility for accuracy, verification, and professional judgment.

Professional-grade tools. Investing in legal-specific AI platforms equipped with built-in safeguards is imperative. Generic consumer tools lack the necessary verification features, legal training, and professional standards for use in courts.

Integrated verification workflows. Verification procedures should be woven into the entirety of the AI lifecycle, rather than being treated as a final check. This includes validation checkpoints during prompt formulation, initial output review, and prior to final submission.
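One way to picture the three checkpoints named above is as ordered sign-offs that a work product must collect before it can be filed. The Python sketch below is a hypothetical illustration only: the `WorkProduct` class, the checkpoint names, and the rule that sign-offs must happen in order are assumptions made for the example, not a prescribed court procedure.

```python
from dataclasses import dataclass, field
from enum import Enum


class Checkpoint(Enum):
    PROMPT_FORMULATION = 1
    INITIAL_OUTPUT_REVIEW = 2
    PRE_SUBMISSION = 3


@dataclass
class WorkProduct:
    title: str
    # Maps each completed checkpoint to the name of the human who signed off.
    sign_offs: dict = field(default_factory=dict)

    def sign_off(self, checkpoint: Checkpoint, reviewer: str) -> None:
        """Record a human sign-off; earlier checkpoints must be complete first."""
        for earlier in Checkpoint:
            if earlier.value < checkpoint.value and earlier not in self.sign_offs:
                raise ValueError(
                    f"{earlier.name} must be signed off before {checkpoint.name}"
                )
        self.sign_offs[checkpoint] = reviewer

    def ready_to_file(self) -> bool:
        """True only when a named reviewer has signed every checkpoint."""
        return all(cp in self.sign_offs for cp in Checkpoint)


if __name__ == "__main__":
    brief = WorkProduct("Draft motion")
    brief.sign_off(Checkpoint.PROMPT_FORMULATION, "reviewer A")
    brief.sign_off(Checkpoint.INITIAL_OUTPUT_REVIEW, "reviewer A")
    print(brief.ready_to_file())  # still missing the pre-submission check
    brief.sign_off(Checkpoint.PRE_SUBMISSION, "reviewer B")
    print(brief.ready_to_file())
```

Recording a named reviewer at each stage keeps responsibility with a person, which matches the human-centric principle described above.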

Moving forward with AI in the courts

The legal profession does not need to start anew. Existing ethical frameworks and professional standards surrounding competence, due diligence, and candor to the court provide a solid foundation for the integration of AI.

Choosing the right technology partners is essential. CoCounsel Legal embodies the professional-grade approach that courts require. It is an AI system specifically trained in legal reasoning, built with verification safeguards, and designed to enhance rather than supplant human judgment. As Justice Tanya R. Kennedy of the Appellate Division, First Judicial Department of New York reminds us, “Whether you are a judge or an attorney, credibility is everything, particularly when you come before the court.”

Professional-grade AI helps maintain that credibility while unlocking unparalleled efficiency and capability. Discover how CoCounsel Legal’s professional-grade AI can elevate your practice with verified, reliable outputs tailored for legal work.