Generative AI has roots in the 1960s, when Joseph Weizenbaum created ELIZA, an early program designed to simulate human-like conversation. Today, generative AI encompasses advanced machine learning models capable of creating many types of content, such as text, images, audio, and code. These models learn patterns from extensive datasets, which allows them to generate original content in response to user inputs.

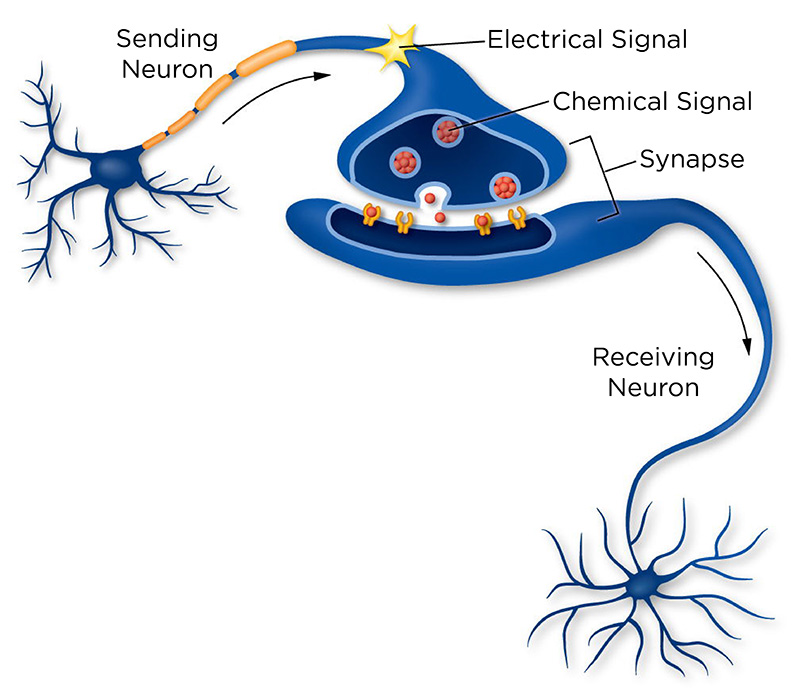

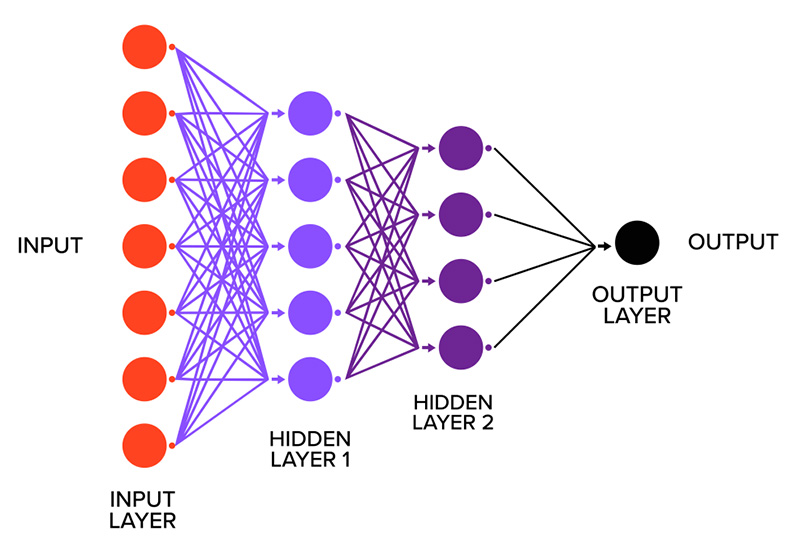

Understanding the growth, implications, and underlying mechanics of generative AI starts with the evolution of models known as artificial neural networks (ANNs). ANNs are inspired by the human brain, where billions of interconnected neurons transmit signals that enable thought, movement, and decision-making. They simplify this concept by using interconnected units, or “neurons,” organized in layers that process information and learn from data.[1] A pivotal development in this field was the introduction of deep neural networks, which use multiple layers to discern complex patterns far beyond the capabilities of earlier models. The evolution of ANNs led to two primary data modeling approaches: discriminative and generative models. Discriminative models focus on prediction tasks: they learn the relationship between inputs and outputs, for example to classify an input. Generative models, by contrast, aim to capture the underlying distribution of the data, which enables them to create new examples that resemble the original data.[2]
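
The contrast between the two approaches can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how production systems are built: the two classes (`class_a`, `class_b`), the single-number data, and the helper names `generate`, `classify`, and `training_errors` are all hypothetical. The generative half estimates how each class's data is distributed and can then sample new examples; the discriminative half learns only a cut-off that separates the classes.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

# Hypothetical toy data: one number per example, drawn from two made-up classes.
data = {
    "class_a": [random.gauss(4.0, 1.0) for _ in range(200)],
    "class_b": [random.gauss(9.0, 1.5) for _ in range(200)],
}

# Generative view: estimate how each class's data is distributed
# (here, just a mean and a standard deviation per class).
params = {
    label: (statistics.mean(xs), statistics.stdev(xs))
    for label, xs in data.items()
}

def generate(label):
    """Draw a new synthetic example that resembles the data seen for `label`."""
    mean, std = params[label]
    return random.gauss(mean, std)

# Discriminative view: ignore how the data is distributed and only learn
# a decision rule (a single cut-off) that separates the two classes.
points = [(x, label) for label, xs in data.items() for x in xs]

def training_errors(cutoff):
    """Count training examples that 'below cutoff means class_a' gets wrong."""
    return sum(
        ("class_a" if x < cutoff else "class_b") != label
        for x, label in points
    )

# Try every observed value as a cut-off and keep the one with fewest errors.
cutoff = min((x for x, _ in points), key=training_errors)

def classify(x):
    """Predict a label for x; this model cannot create new examples."""
    return "class_a" if x < cutoff else "class_b"

print(classify(3.0), classify(10.0))
print(round(generate("class_a"), 2))
```

The discriminative rule can label an input but has nothing to sample from, while the generative model can produce new values because it keeps an estimate of the data distribution itself.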

Advances in models such as variational autoencoders (VAEs), generative adversarial networks (GANs), diffusion models, and transformers have greatly expanded AI’s capacity to generate realistic data. The introduction of foundation models was a breakthrough for generative systems and led to the large-scale generative AI we see today. Unlike earlier models designed for specific tasks, foundation models learn general patterns by working through enormous numbers of “fill in the blank” exercises, such as predicting the next word in a sentence or the next section of an image. This training enables the model to build compact and meaningful representations of concepts and relationships within data, which act like internal maps of how different pieces of information are connected. Training these models is resource-intensive, but once they develop strong representations, they can generate new content, including text, images, and code. Foundation models are the cornerstone of generative AI as we know it.
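
The “predict the next word” exercise can be made concrete with a very small sketch. It is only an analogy: foundation models learn with large neural networks, whereas this example simply counts which word follows which in a tiny made-up corpus. The corpus and the function names `predict_next` and `generate_text` are assumptions introduced for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical miniature "training set"; real models train on vastly more text.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
tokens = corpus.split()

# "Training": for every word, count the words that were observed right after it.
following = defaultdict(Counter)
for current, nxt in zip(tokens, tokens[1:]):
    following[current][nxt] += 1

def predict_next(word):
    """Return the word most often seen after `word`, or None if it was never seen."""
    counts = following.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]

def generate_text(start, length=5):
    """Generate text by repeatedly predicting the next word from the last one."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)

print(predict_next("the"))
print(generate_text("sat", 2))
```

Even this counting scheme shows the basic loop: learn patterns from examples, then generate by predicting one piece at a time. It keeps no richer representation of meaning, which is the gap that large neural models are trained to fill.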

The launch of ChatGPT in November 2022 marked a turning point in the mainstream acceptance of generative AI. Major tech companies then quickly built generative AI tools into widely used platforms, including Microsoft 365 Copilot, Meta AI, and Google’s Gemini in Google Workspace and Search. Beyond productivity and search, generative AI is increasingly applied in fields such as healthcare and education. For instance, ambient AI tools create clinical documentation from clinician-patient conversations, while tools like Khan Academy’s Khanmigo assist with grading and tutoring. As a result, generative AI has become an integral part of everyday life: a kind of default AI that operates even when users are not directly seeking it, reshaping how we engage with digital platforms. This growing integration raises essential governance questions about transparency, accountability, and public benefit, as well as how to address potential risks and maintain trust.

SOURCE: “Neurons Transmit Messages In the Brain,” University of Utah, https://learn.genetics.utah.edu/content/neuroscience/neurons/ (top); “Deep Learning vs. Neural Network: What’s the Difference?,” Smartboost, https://www.smartboost.com/blog/deep-learningvs-neural-network-whatsthe-difference/ (bottom).

Potential Impacts or Issues of Concern

While the benefits of generative AI are evident, its integration also raises significant concerns. Some harms are intentional, while others are unintended. Several noteworthy issues are outlined below:

- Bias: Bias can emerge from both the training data and the values of those who develop the systems. Because large language models (LLMs) are trained on extensive and varied datasets, they can unintentionally reflect pre-existing societal biases. Users’ confirmation bias can also play a role: the wording of their prompts can steer model responses, and earlier interactions can shape later ones over time.

- Ownership: Because data is crucial for generative AI, its use raises questions about ownership and authorship. AI outputs often appear to be content with no ownership claims attached, yet they are frequently derived from existing works without proper consent, credit, or compensation (often referred to as the 3 C’s). This presents intellectual property challenges, particularly for creative professionals.

- Overreliance: While generative AI can improve efficiency by responding quickly, it poses risks of overdependence. Users may adopt cognitive shortcuts, sacrificing thorough analysis for convenience. This shift could stunt skills such as creativity, critical thinking, and problem-solving, and reinforce automation bias rooted in an ingrained trust in AI recommendations. Among younger users, such as high school students, the long-term effects of overreliance could differ notably from those for older individuals and may affect cognitive development. This age group may also turn to AI for digital therapeutic support, potentially delaying engagement with qualified human professionals.

- Misuse: Like many technologies, generative AI can be exploited by people with ill intentions, who can use its capabilities with minimal technical know-how. One notable concern is deepfakes, in which faces, voices, or text are manipulated to create harmful or defamatory media, threatening individuals, institutions, and public trust.

- Environmental Health Impacts: As the use of AI expands, its environmental impact grows. Data centers, which house the servers and networks needed to train, deploy, and operate AI systems, consume vast amounts of energy, contributing to carbon emissions, and require significant water resources for cooling.

ABOUT THE AUTHOR(S)

Sadia Rahman is a New York State Science Fellow at the Rockefeller Institute of Government.

Nikaley Castillo is a research assistant at the Rockefeller Institute of Government.